Hazem Ibrahim

Ph.D. Candidate in Computer Science at NYU

hazem.ibrahim [at] nyu.edu

I'm a PhD candidate in Computer Science at NYU, where I study how algorithms, data, and institutions create systematic inequalities in who is seen, heard, and valued. My work treats bias in socio-technical systems (social-media platforms, large language models, academic publishing) not as a bug in a single model but as an emergent property of tightly coupled computational and social processes.

My research is organized around three mechanisms: visibility bias (who sees what information), representational bias (how groups are portrayed), and institutional bias (how credit, access, and evaluation are allocated). I study these through an end-to-end approach that moves from measurement (building large-scale datasets and quantifying inequalities) to causation (designing field experiments and algorithmic audits that isolate how platform rules and human decisions jointly produce bias) to mitigation (proposing algorithmic and policy interventions). This work spans two empirical domains: social media and LLMs (auditing recommendation algorithms on YouTube and TikTok, measuring the political behavior of LLMs) and academia and bibliographic systems (uncovering citation manipulation and demonstrating racial and institutional biases in access to scientific knowledge).

My findings have been published in Nature, PNAS Nexus, Scientific Reports, and IEEE journals, and covered by outlets including Nature, Science, Scientific American, The Guardian, The Telegraph, and The Times. I was named to MIT Technology Review's Innovators Under 35 list in 2023. Prior to my PhD, I earned an M.Sc. from the University of Toronto and a B.Sc. from NYU Abu Dhabi.

Publications

Published

Authors

Hazem Ibrahim, Jang, H. D., AlDahoul, N., Kaufman, A. R., Rahwan, T., and Zaki, Y.

Summary

Using 323 bot-driven audits over six months, this study reveals systematic partisan content skews in TikTok's recommendation algorithm during the 2024 U.S. presidential race. The platform exhibited measurable political bias in the content it surfaced to users.

2. Analyzing political stances on Twitter/X in the lead-up to the 2024 U.S. election

PoliticalNLP Workshop at EACL (2026)

Authors

Hazem Ibrahim, Khan, F., Rahwan, T., and Zaki, Y.

Summary

This study analyzes 1,235 tweets from major U.S. political figures and 63,322 replies during the 2024 election using an LLM-based classification pipeline. Republican candidates authored significantly more criticism of the Democratic party than vice versa, while Republican-aligned users dominated reply activity across both parties' tweets. Key political events triggered measurable shifts in the ideological positioning of public discourse.

3. Citation manipulation through citation mills and pre-print servers

Scientific Reports (2025)

Authors

Hazem Ibrahim, Liu, F., Zaki, Y., and Rahwan, T.

Summary

Through an undercover sting operation, this study provides conclusive evidence that academic citations can be purchased in bulk through citation boosting services. The bought citations appeared in a Scopus-indexed journal, revealing a systematic vulnerability in scholarly publishing integrity. The findings were covered by Nature and Science.

4. Heritage Language Maintenance: The Case of Bangladeshi Immigrants in Canada

Interaction Design and Architecture(s) Journal (IxD&A) (2025)

Authors

Hazem Ibrahim, Sabie, D., Roy, P., Bhattacharjee, A., Alam, S. M. R., Mim, N. J., and Ahmed, S. I.

Summary

This study interviews 20 Bangladeshi immigrant parents in Canada to explore the challenges they face in maintaining their children's heritage language, Bangla. The findings reveal cultural tensions, economic constraints, and infrastructural barriers to heritage language learning. Design implications are proposed for technologies supporting heritage language maintenance in immigrant communities.

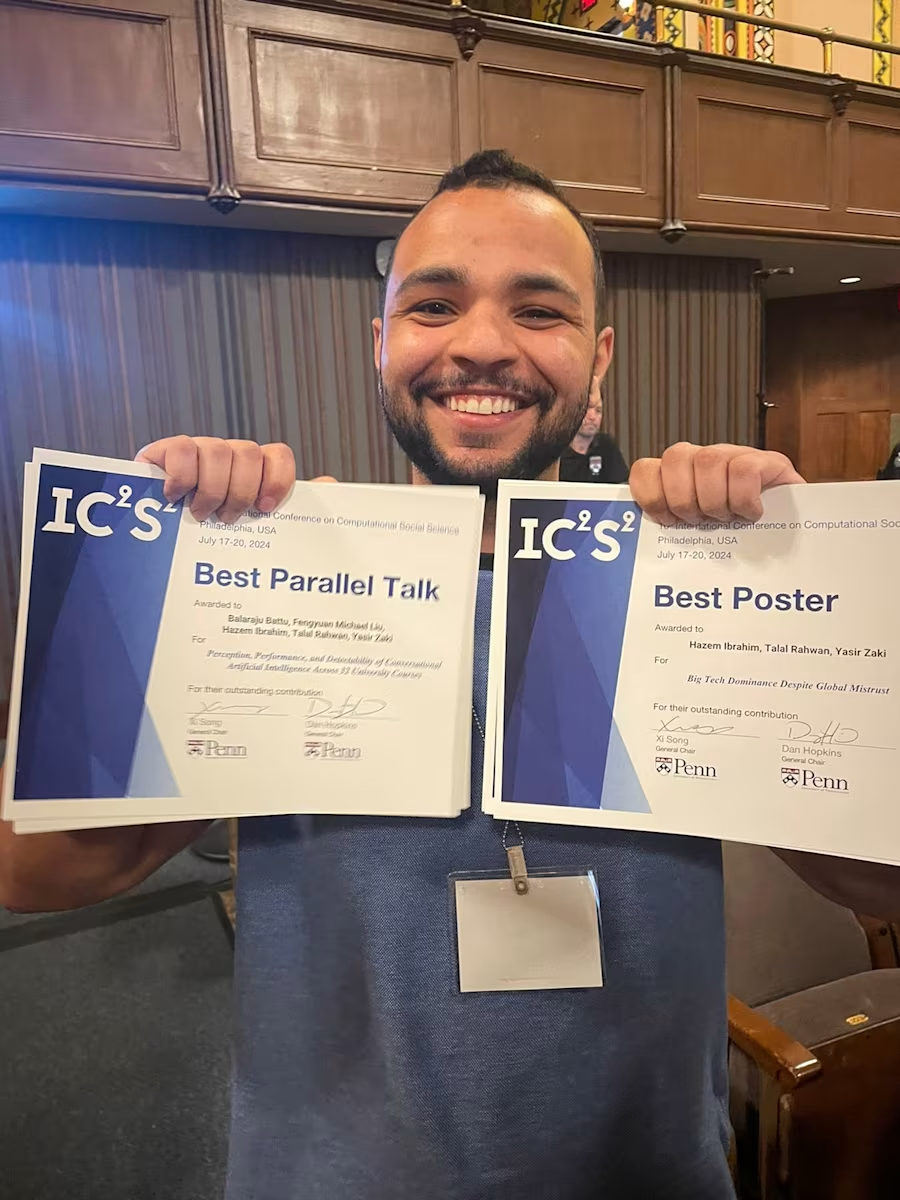

5. Big tech dominance despite global mistrust

IEEE Transactions on Computational Social Systems (2024)

Authors

Hazem Ibrahim, Debicki, M., Rahwan, T., and Zaki, Y.

Summary

This study examines global attitudes toward major technology companies, finding that despite widespread mistrust, big tech firms maintain market dominance. The research spans multiple countries and analyzes the disconnect between public sentiment and continued user dependence on these platforms.

6. YouTube's recommendation algorithm is left-leaning in the United States

PNAS Nexus (2023)

Authors

Hazem Ibrahim, AlDahoul, N., Lee, S., Rahwan, T., and Zaki, Y.

Summary

Through a large-scale algorithmic audit, this study reveals that YouTube's recommendation algorithm exhibits a left-leaning political bias in the United States. The findings challenge prior assumptions about the platform's role in political radicalization and contributed to public debate about algorithmic neutrality.

7. Rethinking homework in the age of artificial intelligence

IEEE Intelligent Systems (2023)

Authors

Hazem Ibrahim, Asim, R., Zaffar, F., Rahwan, T., and Zaki, Y.

Summary

This paper examines the implications of conversational AI systems like ChatGPT for university homework assignments. It argues that traditional homework paradigms need rethinking given AI's growing capabilities and proposes alternative assessment approaches for the age of generative AI.

8. Perception, performance, and detectability of conversational artificial intelligence across 32 university courses

Scientific Reports 13, 12187 (2023)

Authors

Hazem Ibrahim, Liu, F., Asim, R., Battu, B., Benabderrahmane, S., Alhafni, B., Adnan, W., Alhanai, T., AlShebli, B., Baghdadi, R., et al.

Summary

This study evaluates ChatGPT's performance across 32 university courses, finding it achieved comparable or superior grades to students in many cases. The research also assesses the detectability of AI-generated submissions, revealing that existing detection tools are largely ineffective when simple paraphrasing techniques are applied.

9. I tag, you tag, everybody tags!

ACM IMC (2023)

Authors

Hazem Ibrahim, Asim, R., Varvello, M., and Zaki, Y.

Summary

This study evaluates the tracking performance of Apple AirTags and Samsung SmartTags across six countries over 120 days. Both tags achieve similar accuracy, locating objects within 100 meters in about 10 minutes. Half of a person's movements can be backtracked with 10-meter accuracy after just one hour, raising significant privacy concerns.

10. Gamification in online educational systems

6th International Conference on Higher Education Advances (HEAd'20) (2020)

Authors

Hazem Ibrahim and Ibrahim, W.

Summary

This paper reviews the application of gamification in online educational systems and its impact on student motivation and retention. While gamification initially boosts engagement, the effect diminishes as students become familiar with the system. Personalization of the gamified experience has been shown to sustain motivation over longer periods.

11. Multithreaded and reconvergent aware algorithms for accurate digital circuits reliability estimation

IEEE Transactions on Reliability (2018)

Authors

Ibrahim, W., and Hazem Ibrahim

Summary

This paper introduces algorithms for estimating digital circuit reliability that account for reconvergent fan-out effects and use multithreading for efficiency. The proposed methods are as accurate as Bayesian network approaches while being up to five orders of magnitude faster, enabling practical reliability analysis of large-scale circuits.

Under Review

12. Causal evidence of racial and institutional biases in accessing paywalled articles and scientific data

Revise and Resubmit at Science

13. Large language models are often politically extreme, usually ideologically inconsistent, and persuasive even in informational contexts

Revise and Resubmit at American Political Science Review

14. Inclusive content reduces racial and gender biases, yet non-inclusive content dominates popular media outlets

Under review at EPJ Data Science

15. Who Gets Seen in the Age of AI? Adoption Patterns of Large Language Models in Scholarly Writing and Citation Outcomes

Under review at Journal of Informetrics

16. A longitudinal analysis of racial and gender bias in New York Times and Fox News images and articles

Revise and Resubmit at ICWSM 2026

17. Neutralizing the Narrative: AI-Powered Debiasing of Online News Articles

Under review at Engineering Applications of Artificial Intelligence

18. A Tale of Three Location Trackers: AirTag, SmartTag, and Tile

Under review at IMC 2026

In Preparation

19. Structural inequalities in Hollywood representation across a century of film

In preparation

20. Two-thirds of citations to review papers belong to original research

In preparation

21. Measuring the Political Ideology of LLMs Across 90 Countries

In preparation

22. Examining propaganda on Telegram during the Russia/Ukraine War

In preparation

Teaching Experience & Service

Teaching and Guest Lectures

- Guest Lecturer, CS-UH 2219E – Computational Social Science – NYU Abu Dhabi (Spring 2026)

- Instructor, CS-UH 1001 – Introduction to Computer Science – NYU Abu Dhabi (Fall 2020, Summer 2021, Fall 2021)

- Instructor, CS-UH 1002 – Discrete Mathematics – NYU Abu Dhabi (Fall 2021, Spring 2022, Summer 2022)

- Instructor, CS-UH 1050 – Data Structures – NYU Abu Dhabi (Spring 2021, Fall 2021)

- Instructor, CS-UH 2214 – Database Systems – NYU Abu Dhabi (Fall 2021, Spring 2022)

- Instructor, CS-UH 2220 – Machine Learning – NYU Abu Dhabi (Spring 2022)

- Instructor, CS-UH 2219E – Computational Social Science – NYU Abu Dhabi (Spring 2021, Fall 2021)

- Teaching Assistant, CSC148H – Introduction to Computer Science – University of Toronto (Spring 2018, Fall 2018, Fall 2019)

Academic Advising

- Undergraduate Thesis Advisor – Tewoflos Girmay and Yana Holovatska (NYU Abu Dhabi, 2026)

- Undergraduate Thesis Advisor – Farhan Kamrul Khan, Chen Wei Kuo, and Kevin Chu (NYU Abu Dhabi, 2025)

Service

- Reviewer: PNAS Nexus, AAAI ICWSM, IC2S2, ACM WebConf (WWW), ACM CHI, ACM CSCW, and Scientometrics

Media Coverage and Awards

Systematic partisan content skews in TikTok during the 2024 U.S. elections

Using 323 independent bot-driven audits, we tracked changes in TikTok's recommendation algorithm over the six months leading up to the 2024 U.S. presidential election. Our findings were covered by Nature, The Guardian, The Telegraph, El Pais, Der Standard, and NextShark.

Best Poster Award at AI4GS 2025

I received the Best Poster Award for my poster investigating racial and institutional biases in accessing paywalled articles and scientific data.

ChatGPT and Homework

Our paper "Perception, Performance, and Detectability of Conversational Artificial Intelligence Across 32 University Courses" evaluated ChatGPT's ability to solve homework assignments. It was covered by news outlets worldwide, including Scientific American, The Times, The Independent, Nature Asia, Government Tech, Daily Mail, The Daily Beast, New Scientist, EurekAlert!, Phys.org, The National, Neuroscience News, and Nature Middle East.

Citation manipulation

We went undercover, contacted a "citation boosting service", and managed to buy citations that appeared in a Scopus-indexed journal. Our sting operation provided conclusive evidence that citations can be bought in bulk. The findings were covered by Nature and Science.

YouTube's recommendation algorithm is left-leaning in the United States

Our paper "YouTube's recommendation algorithm is left-leaning in the United States" revealed a political bias in YouTube's algorithm. The paper was published in PNAS Nexus and received media coverage from Daily Caller, American Council on Science and Health, The College Fix, and PsyPost.

MIT Innovator Under 35 Award

I was awarded the MIT Innovator Under 35 Award in 2023 for my work on large language models and their impact on university education.